Utkarsh_bauddh

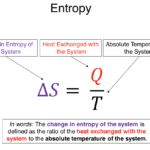

Entropy is a fundamental concept in physics, chemistry, and information science that describes the degree of disorder, randomness, or uncertainty in a system. It is most commonly associated with the second law of thermodynamics, which states that the entropy of an isolated system never decreases: it stays constant in an idealized reversible process and increases in every real, irreversible one. This law explains why natural processes run in one direction and why no heat engine can convert heat into work with perfect efficiency.

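In simple terms, entropy measures how spread out or disorganized energy and matter are. More precisely, it counts how many microscopic arrangements are consistent with what we observe at large scale: a highly ordered system can be realized in few ways and has low entropy, while a disordered one can be realized in many ways and has high entropy. A common, if loose, analogy is a deck of cards: the sorted order is one specific arrangement, while "shuffled" covers an enormous number of arrangements, so shuffling almost always takes the deck away from order. Similarly, when ice melts into water, the rigid, ordered crystal structure of ice gives way to molecules that move more freely, leading to higher entropy.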

In thermodynamics, entropy is crucial for understanding heat transfer and energy flow. When heat flows from a hot object to a cold one, total entropy increases: the cold object gains more entropy than the hot object loses, because the same amount of heat corresponds to a larger entropy change at a lower temperature. This is why heat never flows spontaneously from a colder body to a hotter one without external work. The same reasoning rules out perpetual motion machines of the second kind (devices that would turn heat entirely into work) and explains why every real engine must reject some energy as waste heat.
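The heat-flow argument can be checked with a small calculation. The sketch below assumes two reservoirs large enough that their temperatures stay constant while an amount of heat Q moves between them, so each reservoir's entropy changes by Q/T. The function name and the numbers (1000 J, 400 K, 300 K) are illustrative choices, not values from the text.

```python
def entropy_change_of_heat_flow(q_joules, t_source_kelvin, t_sink_kelvin):
    """Total entropy change (J/K) when heat q leaves a reservoir at
    t_source and enters a reservoir at t_sink.

    Assumes both reservoirs are large enough to stay at constant temperature.
    """
    if t_source_kelvin <= 0 or t_sink_kelvin <= 0:
        raise ValueError("temperatures must be positive and in kelvin")
    ds_source = -q_joules / t_source_kelvin  # the source loses entropy
    ds_sink = q_joules / t_sink_kelvin       # the sink gains entropy
    return ds_source + ds_sink


# Heat flowing from hot (400 K) to cold (300 K): total entropy rises.
hot_to_cold = entropy_change_of_heat_flow(1000.0, 400.0, 300.0)
print(f"hot -> cold: {hot_to_cold:+.3f} J/K")  # +0.833 J/K

# The reverse flow would lower total entropy, so it does not happen unaided.
cold_to_hot = entropy_change_of_heat_flow(1000.0, 300.0, 400.0)
print(f"cold -> hot: {cold_to_hot:+.3f} J/K")  # -0.833 J/K
```

The sign of the result is what matters: a positive total is allowed by the second law, a negative one is not without external work.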

In chemistry, entropy helps predict whether a reaction will occur spontaneously. A process is spontaneous when it increases the total entropy of the system and its surroundings together; the entropy of the system alone may rise or fall. For instance, a gas expanding to fill a container and many substances dissolving in water are processes that increase entropy.
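At constant temperature and pressure, chemists usually apply this criterion through the Gibbs free energy change, ΔG = ΔH − TΔS, where a negative ΔG corresponds to an increase in total entropy. The sketch below applies it to melting ice, using approximate textbook values for water (ΔH of fusion about 6.01 kJ/mol, ΔS of fusion about 22.0 J/(mol·K)) and assuming both stay constant over the small temperature range shown. The function names are illustrative.

```python
def gibbs_free_energy_change(delta_h_j_per_mol, delta_s_j_per_mol_k, temp_kelvin):
    """Return delta G = delta H - T * delta S, in J/mol."""
    return delta_h_j_per_mol - temp_kelvin * delta_s_j_per_mol_k


def is_spontaneous(delta_h_j_per_mol, delta_s_j_per_mol_k, temp_kelvin):
    """A process at constant T and P is spontaneous when delta G is negative."""
    return gibbs_free_energy_change(
        delta_h_j_per_mol, delta_s_j_per_mol_k, temp_kelvin
    ) < 0


# Approximate values for melting ice (assumed constant with temperature).
DELTA_H_FUSION = 6010.0  # J/mol
DELTA_S_FUSION = 22.0    # J/(mol*K)

for temp_kelvin in (263.15, 283.15):  # -10 degrees C and +10 degrees C
    dg = gibbs_free_energy_change(DELTA_H_FUSION, DELTA_S_FUSION, temp_kelvin)
    verdict = "melts" if dg < 0 else "stays frozen"
    print(f"{temp_kelvin:.2f} K: delta G = {dg:+.1f} J/mol, ice {verdict}")
```

Below the melting point the enthalpy term wins and ΔG is positive; above it the entropy term wins and melting becomes spontaneous. The crossover sits near 6010 / 22.0 ≈ 273 K, which matches the familiar melting point of ice.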

Entropy also has a deep connection with time. The tendency of entropy to increase gives rise to the “arrow of time,” meaning time appears to move in one direction, from past to future. Broken objects do not spontaneously reassemble, and smoke does not return to a cigarette, because doing so would require a decrease in entropy, which for any macroscopic system is so overwhelmingly improbable that it is never observed.

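Beyond physics, entropy is used in information theory, where it measures the average uncertainty of a source of data, usually in bits per symbol. In this context, higher entropy means the data is less predictable and therefore carries more information per symbol. This idea is essential in fields like data compression, where entropy sets a lower bound on the average size of a lossless encoding, as well as cryptography and communication systems.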
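As a rough illustration, the Shannon entropy of a sequence can be estimated from how often each symbol appears: H = Σ p · log2(1/p), summed over the distinct symbols. The sketch below is a minimal version that treats observed frequencies as probabilities; the function name and sample strings are illustrative.

```python
import math
from collections import Counter


def shannon_entropy(symbols):
    """Estimate Shannon entropy in bits per symbol from observed frequencies."""
    counts = Counter(symbols)
    total = sum(counts.values())
    if total == 0:
        return 0.0  # an empty sequence carries no information
    # Sum p * log2(1/p) over each distinct symbol.
    return sum(
        (count / total) * math.log2(total / count)
        for count in counts.values()
    )


print(shannon_entropy("aaaa"))  # 0.0 -> fully predictable
print(shannon_entropy("abab"))  # 1.0 -> one bit per symbol
print(shannon_entropy("abcd"))  # 2.0 -> two bits per symbol
```

A string made of one repeated character has zero entropy, while a string that uses four characters equally often needs two bits per symbol, which is the intuition behind why repetitive data compresses well and random-looking data does not.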

In summary, entropy is a measure of disorder, energy dispersion, and uncertainty. It explains why natural processes have a preferred direction, why no energy conversion is perfectly efficient, and why the universe as a whole tends toward greater disorder. Though it may seem abstract, entropy plays a central role in understanding how the physical world works, from everyday phenomena to the evolution of the universe itself.